Introduction

The BEAT framework describes experiments through fundamental building blocks (object types):

- Data formats: the specification of the data transmitted between the blocks of a toolchain;
- Algorithms: the program (source code or binaries) that defines the user algorithm to be run within the blocks of a toolchain;
- Libraries: routines (source code or binaries) that can be incorporated into other libraries or into user code in algorithms;
- Databases and Datasets: the means to read raw data from disk and feed it into a toolchain, following a given usage protocol;
- Toolchains: the definition of the data flow in an experiment;
- Experiments: the combination of algorithms, datasets, a toolchain and parameters that allows the system to run the prescribed recipe and produce displayable results.

Instances of these building blocks are represented in JSON using a specific schema. Multiple objects can be stored on disk as files following a directory structure.
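As an illustration only, the sketch below stores an object declaration as a JSON file and reads it back. It assumes a hypothetical JSON body in which each field of a data format maps to a primitive type name (`{"value": "int32"}`) and a hypothetical layout `<prefix>/<kind>/<user>/<name>/<version>.json`; neither is taken from the actual BEAT schema, and the helper names `store_object` and `load_object` are made up.

```python
import json
import tempfile
from pathlib import Path


def object_path(prefix, kind, user, name, version):
    """Build <prefix>/<kind>/<user>/<name>/<version>.json (hypothetical layout)."""
    return Path(prefix) / kind / user / name / f"{version}.json"


def store_object(prefix, kind, user, name, version, declaration):
    """Write a JSON declaration to disk, creating directories as needed."""
    path = object_path(prefix, kind, user, name, version)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(declaration, indent=2))
    return path


def load_object(prefix, kind, user, name, version):
    """Read back a declaration written by store_object()."""
    return json.loads(object_path(prefix, kind, user, name, version).read_text())


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as prefix:
        # Hypothetical declaration for a format called "integer" from user "beat".
        store_object(prefix, "dataformats", "beat", "integer", 1, {"value": "int32"})
        print(load_object(prefix, "dataformats", "beat", "integer", 1))
```

Deriving the file path from the user, name and version keeps one file per versioned object, so a new version never overwrites an older one.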

A Simple Example

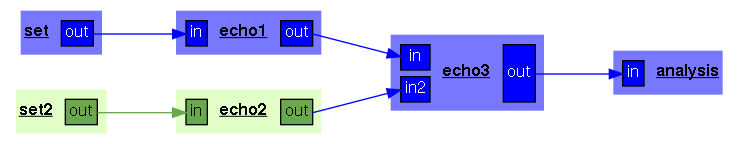

The figure above shows a representation of a very simple toolchain, composed of only a few color-coded components:

- To the left, the reader can identify two datasets, named set and set2. They emit data (of a type that is, at this point, unspecified) into the following processing blocks;
- Following the datasets, two processing blocks, named echo1 and echo2, receive the inputs from the datasets and emit data into a third block, named echo3;
- The final component, called analysis, receives the inputs emitted from echo3. Because this block has no output, it is considered a final block, from which BEAT expects to collect experiment results (which, at this point, are also unspecified).

The toolchain only defines the basic data flow and connections that experiments must respect. It does not define the type of data produced or consumed by any of its blocks, nor the algorithms, databases or protocols to use. From the toolchain description alone, it is possible to derive an execution order that respects the imposed data flow. In this simple example, the datasets called set and set2 may yield data in parallel, allowing the execution of blocks echo1 and echo2. Block echo3 must come next, and the analysis block comes last.
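The execution order described above is a topological ordering of the toolchain graph. The sketch below derives it with Python's standard `graphlib.TopologicalSorter` (Python 3.9+), grouping blocks into stages whose members have no dependencies on each other and could therefore run in parallel. The block names come from the example; encoding the connections as a mapping from each block to its predecessors is an assumption of this sketch, not the BEAT toolchain format.

```python
from graphlib import TopologicalSorter

# Each block maps to the set of blocks it receives data from (its predecessors).
TOOLCHAIN = {
    "set": set(),
    "set2": set(),
    "echo1": {"set"},
    "echo2": {"set2"},
    "echo3": {"echo1", "echo2"},
    "analysis": {"echo3"},
}


def execution_stages(toolchain):
    """Group blocks into stages; blocks in one stage only depend on earlier stages."""
    sorter = TopologicalSorter(toolchain)
    sorter.prepare()  # raises graphlib.CycleError if the data flow has a loop
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a deterministic listing
        stages.append(ready)
        sorter.done(*ready)
    return stages


print(execution_stages(TOOLCHAIN))
# [['set', 'set2'], ['echo1', 'echo2'], ['echo3'], ['analysis']]
```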

In typical problems that can be implemented in BEAT, datasets are composed of multiple instances of raw data. For example, these could be images for an object recognition problem, speech sequences for a speech recognition task, or model data for biometric recognition tasks. Computing blocks must process these data by looping over the atomic data samples. The color-coding in the figure indicates this extra data-flow information: for each dataset in the drawing, it shows which blocks loop over its atomic data. In the proposed toolchain, blocks echo1, echo3 and analysis loop over the “raw” data samples from set, while echo2 loops over the samples from set2.
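The color-coding can be read as a mapping from each processing block to the dataset it loops over. The sketch below encodes that mapping for the toolchain above and counts how many atomic samples each block processes. The dataset sizes used in the example call (4 and 2) are invented for illustration, and `iterations_per_block` is a hypothetical helper.

```python
# Dataset each processing block loops over, following the figure's color-coding.
SYNCHRONIZED_WITH = {
    "echo1": "set",
    "echo2": "set2",
    "echo3": "set",
    "analysis": "set",
}


def iterations_per_block(dataset_sizes, synchronized_with=SYNCHRONIZED_WITH):
    """Return the number of samples each block processes.

    A block performs one iteration per sample of the dataset it loops over.
    """
    return {block: dataset_sizes[ds] for block, ds in synchronized_with.items()}


# Hypothetical dataset sizes, for illustration only.
print(iterations_per_block({"set": 4, "set2": 2}))
```

With these sizes, echo3 runs once per sample of set even though one of its inputs (from echo2) changes only twice, which is the mismatch discussed for echo3 below.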

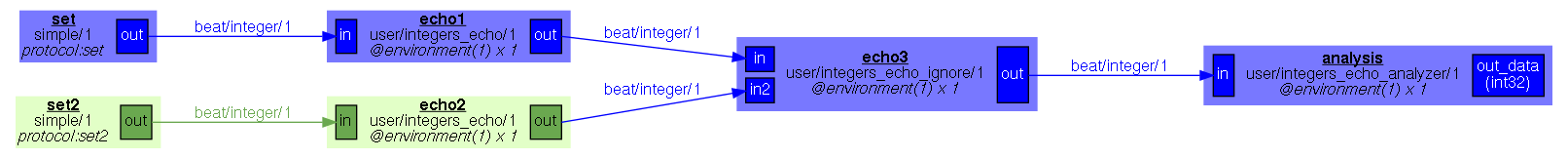

The figure above shows a complete experimental setup for this toolchain. The input blocks use a database called simple/1 (the name is simple and the version is 1), through one of its protocols, called protocol. Each dataset block is assigned a specific set inside this database/protocol combination. Both sets yield objects of type beat/integer/1 (a format called integer from user beat, version 1), which are consumed by the algorithms running on the next blocks. Block echo1 uses the algorithm user/integers_echo/1 (an algorithm called integers_echo from user user, version 1), which also yields beat/integer/1 objects. The same holds for the algorithm running on block echo2.
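The source of user/integers_echo/1 is not shown here. As a rough sketch of what an echo algorithm might look like, the code below assumes a hypothetical interface in which the platform calls `process(inputs, outputs)` once per sample, each input exposes its current data as a dictionary, and each output has a `write()` method. The names `in_data`, `IntegersEcho`, `run_block` and the stand-in `Input`/`Output` classes are made up so that the example runs on its own; the real BEAT algorithm API may differ.

```python
class Input:
    """Hypothetical stand-in for a block input holding the current sample."""

    def __init__(self, data):
        self.data = data


class Output:
    """Hypothetical stand-in for a block output that records what is written."""

    def __init__(self):
        self.written = []

    def write(self, data):
        self.written.append(data)


class IntegersEcho:
    """Echo algorithm: forwards each integer it receives, unchanged."""

    def process(self, inputs, outputs):
        outputs["out_data"].write({"value": inputs["in_data"].data["value"]})
        return True  # signal that processing may continue


def run_block(algorithm, samples):
    """Feed samples one at a time, as a block looping over one dataset would."""
    output = Output()
    for value in samples:
        if not algorithm.process({"in_data": Input({"value": value})}, {"out_data": output}):
            break
    return [d["value"] for d in output.written]


print(run_block(IntegersEcho(), [3, 1, 4]))  # [3, 1, 4]
```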

The algorithm for block echo3 cannot be the same: it must deal with two inputs, generated by blocks that loop over different raw data. The conceptual differences in writing algorithms that are not synchronized with all of their inputs are covered in more detail later. For this introduction, it suffices to understand that the organization of algorithms in an experiment is constrained by the requirements of neighboring blocks, as well as by the input and output data flows determined for each block.
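One way to picture a block whose inputs loop over different datasets is sketched below. It assumes, hypothetically, that each value coming from echo2 is tagged with the range of set-sample indices for which it remains valid, and that echo3 simply adds the two integers. Neither the tagging scheme nor the addition is taken from the algorithms used in the experiment or from the BEAT API; `combine` is a made-up name.

```python
def combine(primary, secondary):
    """Pair each primary sample with the secondary value covering it.

    primary:   values from echo1, one per sample of `set` (drives the loop).
    secondary: (first, last, value) triples from echo2; each value is assumed
               valid for primary-sample indices first..last inclusive.
    Returns one output per primary sample (here, a hypothetical sum).
    """
    results = []
    for index, value in enumerate(primary):
        matching = [v for first, last, v in secondary if first <= index <= last]
        if len(matching) != 1:
            raise ValueError(f"no unique secondary value for sample {index}")
        results.append(value + matching[0])
    return results


print(combine([1, 2, 3, 4], [(0, 1, 10), (2, 3, 20)]))  # [11, 12, 23, 24]
```

The block iterates at the rate of its synchronized input (set), while the other input is looked up rather than iterated over.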

Block echo3 yields elements to the algorithm on the analysis block, called user/integers_echo_analyzer/1, which produces a single result named out_data, of type int32 (a signed 32-bit integer). Algorithms that do not send data to other algorithms are typically called analyzers. They are placed at the end of experiments to produce quantifiable results that you can use to measure the performance of your experimental setup.
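The text does not say what value user/integers_echo_analyzer/1 actually computes. The sketch below assumes, for illustration only, that it sums the integers it receives and reports the total as out_data, checking that the total fits the int32 range. The class `IntegersAnalyzer` and its methods are hypothetical.

```python
INT32_MIN, INT32_MAX = -(2**31), 2**31 - 1


class IntegersAnalyzer:
    """Hypothetical analyzer: consumes integers and yields one int32 result."""

    def __init__(self):
        self.total = 0

    def process(self, value):
        # Called once per sample coming from echo3; only accumulates state,
        # since an analyzer sends nothing to other blocks.
        self.total += value
        return True

    def result(self):
        # The single result, named out_data as in the experiment above.
        if not INT32_MIN <= self.total <= INT32_MAX:
            raise OverflowError("result does not fit in a signed 32-bit integer")
        return {"out_data": self.total}


analyzer = IntegersAnalyzer()
for v in [11, 12, 23, 24]:
    analyzer.process(v)
print(analyzer.result())  # {'out_data': 70}
```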

Design

The next figure shows a UML representation of the main BEAT components, including some of their interactions and interdependencies. Experiments use algorithms, datasets and a toolchain to define a complete runnable setup. Datasets are grouped into protocols which are, in turn, grouped into databases. Algorithms use data formats to define their input and output patterns. Most objects are versioned, have a name and belong to a specific user. By combining these markers, it is possible to define unique identifiers for all objects in the platform. The example above contains several of them, such as beat/integer/1 and user/integers_echo/1.

![digraph hierarchy {
graph [compound=true, splines=polyline]
node [shape=record, style=filled, fillcolor=gray95]
edge []
subgraph "algorithm_cluster" {
1[label = "{Dataformat|...|+user\n+name\n+version}"]
2[label = "{Algorithm|...|+user\n+name\n+version\n+code\n+language}"]
6[label = "{Library|...|+user\n+name\n+version\n+code\n+language}"]
}
subgraph "database_cluster" {
graph [label=datasets]
3[label = "{Database|...|+name\n+version}"]
4[label = "{Protocol|...|+template}"]
5[label = "Set"]
}
subgraph "experiment_cluster" {
graph [label=experiments]
7[label = "{Toolchain|+execution_order()|+user\n+name\n+version}"]
8[label = "{Experiment|...|+user\n+label}"]
}
1->1 [label = "0..*", arrowhead=empty]
2->1 [label = "1..*", arrowhead=empty]
2->6 [label = "0..*", arrowhead=empty]
6->6 [label = "0..*", arrowhead=empty]
4->3 [label = "1..*", arrowhead=odiamond]
5->4 [label = "1..*", arrowhead=odiamond]
5->1 [label = "1..*", arrowhead=empty]
8->7 [label = "1..1", arrowhead=empty]
8->2 [label = "1..*", arrowhead=empty]
8->5 [label = "1..*", arrowhead=empty]
}](_images/graphviz-bb15668e320f409b843ec309d3bab0d5391ac838.png)
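Following the markers in the diagram, most identifiers combine user, name and version (beat/integer/1), while databases carry only a name and a version (simple/1). The sketch below parses both forms into one hypothetical `Identifier` type; it does not cover experiments, which the diagram marks with a user and a label instead, nor protocols and sets, which are addressed through their database. The type and function names are assumptions of this sketch.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Identifier:
    """Unique marker of a versioned BEAT object (hypothetical representation)."""

    user: Optional[str]  # None for objects without an owner, such as databases
    name: str
    version: int

    def __str__(self):
        prefix = [self.user] if self.user is not None else []
        return "/".join(prefix + [self.name, str(self.version)])


def parse_identifier(text):
    """Parse 'user/name/version' (e.g. beat/integer/1) or 'name/version' (e.g. simple/1)."""
    parts = text.split("/")
    if len(parts) == 3:
        user, name, version = parts
    elif len(parts) == 2:
        user = None
        name, version = parts
    else:
        raise ValueError(f"unexpected identifier: {text!r}")
    return Identifier(user, name, int(version))


for text in ["beat/integer/1", "user/integers_echo/1", "simple/1"]:
    print(repr(parse_identifier(text)))
```

Making the type frozen lets identifiers serve as dictionary keys, for example when indexing objects loaded from disk.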

The BEAT framework provides a graphical user interface so that users can program data formats, algorithms and toolchains, and define experiments, rather intuitively. It also provides a command-line interface, which can be used alongside the GUI to run experiments, to create, modify or delete objects, and to interact with the BEAT platform.